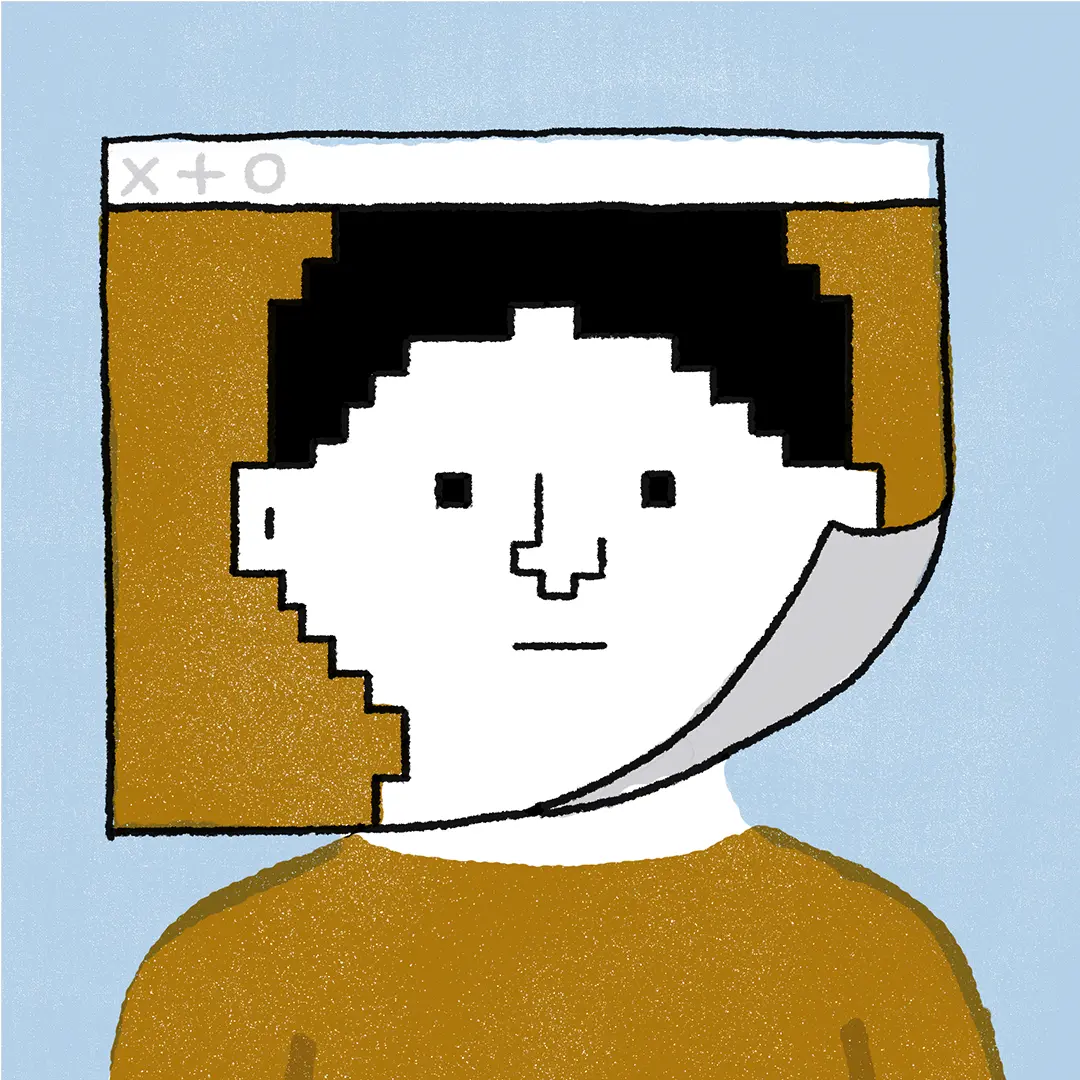

Better remote collaboration will require radically reimagining user interfaces

Published on January 15, 2021

There's a scene from 2002’s neo-noir thriller Minority Report in which Tom Cruise's John Anderton searches through hundreds of video clips, looking for evidence of a crime. But he doesn’t use a familiar desktop computer or laptop. Instead he swipes through clips on an arcing holographic screen using glowing data gloves in lieu of a mouse. When a piece of evidence catches his eye, he makes an intricate hand gesture, pulling up the video.

Even today, some 18 years after the film’s theatrical release, the idea of interacting with holograms in the air feels like science fiction — but it isn’t.

In preparation for the movie, John Underkoffler, a UI researcher, designed and built a prototype gestural language system and supporting computer interface. When Anderton pinches the display to pause a video or twists three fingers to fast-forward, it looks real — and that’s because it is.

After Minority Report premiered, several Fortune 500 companies, including Accenture, Wells Fargo, and Fujitsu, called Underkoffler wanting their own version of the Minority Report interface. Their desire was understandable. Against a backdrop of seismic technological change, the user interfaces we use at work have remained stagnant for decades.

The laptops on our desks are hundreds of thousands of times more powerful than the desktop computers we had in the 1980s and 1990s. But the interfaces are more or less the same.

Worse, they’re designed for a rapidly disappearing world. Our existing interfaces assume a shared office, one with lots of in-person interaction between colleagues. But knowledge work is undergoing a sea change toward distributed teams, and that old environment is crumbling.

How we interact with technology defines the work we can do and, therefore, how we think about our work. Staid interfaces limit our potential. They hamstring our creativity and stymie our problem-solving capabilities.

Interfaces need to evolve for this new mode of work, or they’ll hold us back by making it difficult to collaborate—ultimately constraining our thinking.

Interfaces frame our interactions

Computation drives most modern technological progress. Every breakthrough from artificial intelligence and gene therapy to space flight and renewable energy owes much of its success to inexorable growth in computing power. But for humans, that poses a challenge. We have evolved to understand the physical world — complete with three dimensions, bodily sensations, and so on. The digital realm bears little resemblance to our own.

The means by which we navigate between the understandable physical world and the incomprehensible digital realm is called an interface, the most widespread of which is the GUI, or graphical user interface.

Apple popularized the GUI in 1984 with its Macintosh computer. You had a monitor, keyboard, and mouse. You interacted with the system through a two-dimensional interface of windows. That was considered groundbreaking nearly 40 years ago. But since then, innovation has been slow.

“General purpose user interfaces in every application except for gaming have made no strides, no quantum leaps, since 1984,” Underkoffler told VentureBeat in 2018. “That’s the year that Apple turned the command line interface into the GUI, the graphical user interface. Everything that has come after has been a step to the side.”

Indeed, often our interfaces are willfully ignorant of our physical world. That’s important because digital tools, services, and platforms do not exist in isolation. How each interface integrates — or doesn’t integrate — with its surrounding worlds matters.

“The context of experience exerts a great amount of control over determining what knowledge and understanding are formed in that experience,” wrote researchers at the University of Edinburgh. “As is popularly said, 93% of communication is non-verbal, ‘the medium is the message,’ and ‘there is no out-of-context.’”

Academics Christian Freksa, Alexander Klippel, and Stephan Winter provide a handful of examples relating to map software. In each scenario, they manipulate the relationship between spatial environment, digital reproduction, and user. The team argues that providing enough context allows users to solve problems that they couldn’t figure out by looking at either the real world or their digital map alone.

Other interface failings stem not only from absences, but from unintended consequences of purposeful design features. Computer scientist turned tech philosopher Jaron Lanier has repeatedly criticized the way we interact with the World Wide Web. He told Scientific American that core components, like user interfaces and logins, are “sources of fragmentation to be exploited by others.”

He singles out the proliferation of pseudonyms for criticism. Most websites, from social media platforms to news forums, allow users to comment anonymously. Because interfaces allow users to interact behind a mask of anonymity, they empower some to unleash vicious abuse and violent opinions. "Trolling is not a string of isolated incidents," Lanier wrote in his book You Are Not a Gadget, "but the status quo in the online world."

Underkoffler, Lanier, and other tech-industry commentators, as well as academics like Freksa, Klippel, and Winter, acknowledge that how we interact with our digital worlds matters. It affects our information consumption and defines how we process it. And all agree that we have barely scratched the surface of interface design.

A brave new world

While the GUI is still the dominant interface, that’s not to say researchers aren’t exploring the peripheries. For example, Lanier devoted much of his early career to pioneering work in virtual reality. He’s even credited with coining the term.

He once believed virtual reality interfaces would trigger a revolution in art and communication. Instead of talking over the phone, he predicted that people would meet in virtual reality parties, complete with “strange and exotic” virtual avatars.

“I had this feeling of people living in isolated spheres of incredible cognitive and stylistic wealth,” he told The New Yorker in 2011.

While his vision for virtual reality has yet to fully manifest, his work in the neighboring field of augmented reality did bear fruit. Lanier spent several years at Microsoft working on its motion-based gaming system called Kinect. Instead of manipulating video games with a controller, Kinect matched the user’s real-world movements to their in-game character. If the player jumped in real life, their avatar jumped on the screen. Reflecting on his work, Lanier said Kinect “expand[ed] what it means to think.”

The relationship between physical and digital space is something Underkoffler has grappled with, too.

“The problem, as we see it, has to do with a single, simple word: space—or a single, simple phrase: real-world geometry,” Underkoffler said during a 2010 TED talk. “Computers and the programming languages that we talk to them in, that we teach them in, are hideously insensate when it comes to space. They don't understand real-world space.”

Platforms such as Mezzanine, developed by Underkoffler’s company Oblong, address the disconnect between physical and virtual space. For example, Mezzanine allows people to interact with technology in three dimensions, rather than through a two-dimensional representation of space.

One company uses Oblong’s technology to design and simulate reservoirs. Instead of building the system via a keyboard and mouse, its engineers interact with the model using their hands. If they want to move a wellhead 500 meters to the north, they can use Minority Report-esque data gloves to pick up the virtual model and shift it to a new location.

In another example, Oblong engineer Pete Hawkins took an Excel sheet with data showing seismic activity, and represented it visually on a projection of Earth in Mezzanine. Different colors denote different depths. Larger dots mark more significant movements.

“Our goal is to get beyond rows and columns of data,” Hawkins explained. “In an Excel spreadsheet, our experience with the data is severely limited. By putting this in human terms, we get more of a human take.”

Another challenge facing our interfaces is collaboration. Today, work is often the product of many people’s efforts — but their computers are still siloed. While you can encourage computers to work together via networks, they are, on a fundamental level, standalone machines.

This was bearable in the office, where colleagues could physically walk between desks and look at the same display. But with an increasingly distributed workforce, that doesn’t really work anymore. Screen-sharing functionality and collaboration software attempt to bridge the gap, but do so poorly.

To enable a new era of remote work, experimental interfaces will need to expand beyond the traditional one-to-one pairings between human and machine. Instead of building individual digital worlds for each employee, they may need to create large shared workspaces where colleagues can collaborate in the same space, sharing content, ideas, and work.

Thirty-six years and counting

Apple debuted the Macintosh’s graphical user interface in 1984, some 36 years ago. If you presented someone from Gen Z with a Macintosh 128K, the original Macintosh, they would likely be able to find their way around. Some would say that’s because the interface is intuitive and easy to use. But interface critics such as Underkoffler and Lanier argue that it’s because our contemporary interfaces are merely high-resolution copies.

Instead of innovating and experimenting, we’ve stuck with what worked because it … worked.

Just working isn’t good enough, especially with our overnight transition to distributed work. In a remote universe, our interfaces need to evolve to foster human connection. After all, we’re social animals, and we won’t thrive, innovate, or feel happy if we work in complete isolation.

“We're, as human beings, the creatures that create,” Underkoffler said. “We should make sure that our machines aid us in that task and are built in that same image.”